(with contributions by Alexis Clancy)

* * * * * * * * * * * * * * * * * * * * * * * * * * * *

1. Inspiration in the work of Walter M. Elsasser

Towards a Truly Scientific Biology

In a great book in the budding field of Holistic Biology (Reflections on a Theory of Organisms: Holism in Biology), Walter M. Elsasser argues that the task of elaborating a truly scientific biology still lies ahead of us. Physics and chemistry, in their current states of knowledge, are truly scientific, according to Elsasser. Physics, for example, reached scientific status with the unfolding of the twentieth-century theoretical systems of quantum mechanics and relativity. Biology, on the other hand – molecular, evolutionary and genetic biology – is not scientific. It is reductionist. Current biological paradigms reduce our understanding of the living organism to a combinatorial model or formula such as the genetic code. But the genetic message is only a symbol of the complete reproductive process. “The message of the genetic code,” writes Elsasser, “does not amount to a complete and exhaustive information sequence that would be sufficient to reconstruct the new organism on the basis of coded data alone.” On this basis, we call into question the very concept of data.

The Cartesian Method vs. Complementarity

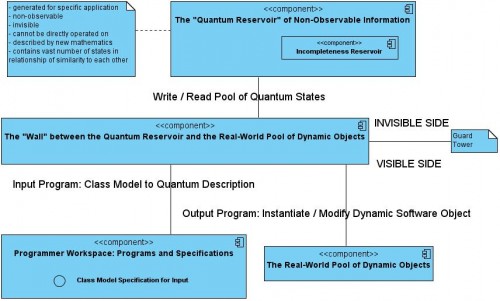

This reductionism on the part of biologists corresponds to the computational paradigm of binary or digital computing that has been available to us in the twentieth century. It is almost as if the biologists decided: since this is the limit of the computing power that we have, we will devise a biology that functions within the restrictions of what we can compute. The question is how we deal with complexity. Within the existing or dominant computing paradigm, in order to deal with a complex problem, we break the problem down into smaller, more manageable parts. This is essentially the Cartesian Method. But it is impossible to apply the Cartesian Method to quantum-mechanical generalized complementarities like the wave-particle duality or the Heisenberg Uncertainty Principle. Whereas the Cartesian Method may work for mechanical systems, it cannot be of much use when we aspire to the understanding or creation of something that is living. The more correct approach, one that would correspond to a breakthrough into twenty-first-century science, would be to identify relationships of similarity, to find samples or patterns that capture something of the vitality and complexity of the whole without breaking it down in a reductionist way.

Holistic Information is Real

We need a Holistic Biology where we consider the living organism in its true complexity. The structural complexity of even a single living cell is ‘transcomputational’. Elsasser writes that grasping a living organism (or organic structure) in a truly scientific way is a computational problem of unfathomable complexity. The single living cell is involved in a network of relationships with all life on the planet, with the planet itself, and with the history of life. The individual member of a species decodes its species-memory in real time, as it faces each new circumstance. It creatively retrieves this species-memory through a process of information transfer that is effectively ‘invisible’, and that does not take place via any intermediate storage or transmission media. Holistic information transfer happens over space and time, “without there being any intervening medium or process that carries the information.” Whereas the genetic code is memory considered as ‘homogeneous replication’, holistic memory is one of ‘heterogeneous reproduction’.

Birth of a New Machine

Although Elsasser’s book is ostensibly about Holistic Biology, I read it as being a blueprint for Computer Science 2.0. Starting from Elsasser’s ideas about the living organism considered as an information system, and his reflections on how this information system actually works in the living being, we develop the design and construction of a new kind of computer. What we are in fact concerned with is a new kind of software system. At this early stage of the work, it does not yet have anything to do with hardware. But I find it reasonable to speak about this new kind of software system as being a new kind of computer.

Identities and Differences Make Static Software

One of our basic insights is that currently existing software is based on a logic of discrete identities and discrete differences. Given this logic, the instantiated software object remains essentially static. The properties of the software object are given to it at the time of its inception, or its “construction” as an object. Its blueprint or definition lies within the software classes, which provide it with a finite number of states (as represented, for example, in the graphical artefact of the state machine diagram), a definite identity and definite properties. This produces an essentially static system. There is no real dimension of time. The linearity of time is the mere playing out of something static. The software object stays what it is throughout its lifetime, until the programmer deletes it, or the system shuts it or itself down.
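To make the point about static software objects concrete, here is a minimal sketch in Python of the kind of object described above. It is only an illustration: the names `DoorState`, `TRANSITIONS` and `Door` are hypothetical, chosen for this example, and do not come from Elsasser or from any existing system. The class fixes in advance the object's finite set of states, its permitted transitions and its properties. Everything the object can ever become is already written down before it is constructed.

```python
from enum import Enum


class DoorState(Enum):
    """The complete, finite set of states the object can ever be in."""
    OPEN = "open"
    CLOSED = "closed"
    LOCKED = "locked"


# The state machine diagram, written as a table: (current state, event) -> next state.
# Hypothetical example data; nothing outside this table can ever happen to a Door.
TRANSITIONS = {
    (DoorState.OPEN, "close"): DoorState.CLOSED,
    (DoorState.CLOSED, "open"): DoorState.OPEN,
    (DoorState.CLOSED, "lock"): DoorState.LOCKED,
    (DoorState.LOCKED, "unlock"): DoorState.CLOSED,
}


class Door:
    # __slots__ fixes the object's properties in the class definition:
    # no new attribute can be attached to an instance later on.
    __slots__ = ("name", "state")

    def __init__(self, name):
        # Identity and properties are assigned once, at construction time.
        self.name = name
        self.state = DoorState.CLOSED

    def handle(self, event):
        """Move to the next state if the class-level table allows it."""
        key = (self.state, event)
        if key not in TRANSITIONS:
            raise ValueError(f"{event!r} is not allowed in state {self.state.name}")
        self.state = TRANSITIONS[key]
        return self.state


if __name__ == "__main__":
    door = Door("front")
    for event in ("open", "close", "lock"):
        print(event, "->", door.handle(event).name)
```

However long such an object lives, it only ever replays paths through a table that was fixed beforehand. This is what is meant above by time being the mere playing out of something static.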

…